🔥 Microservices Interview Questions for AI / ML Engineers

- Mohammed Vasim

- Microservices, MLOps

- 03 Feb, 2026

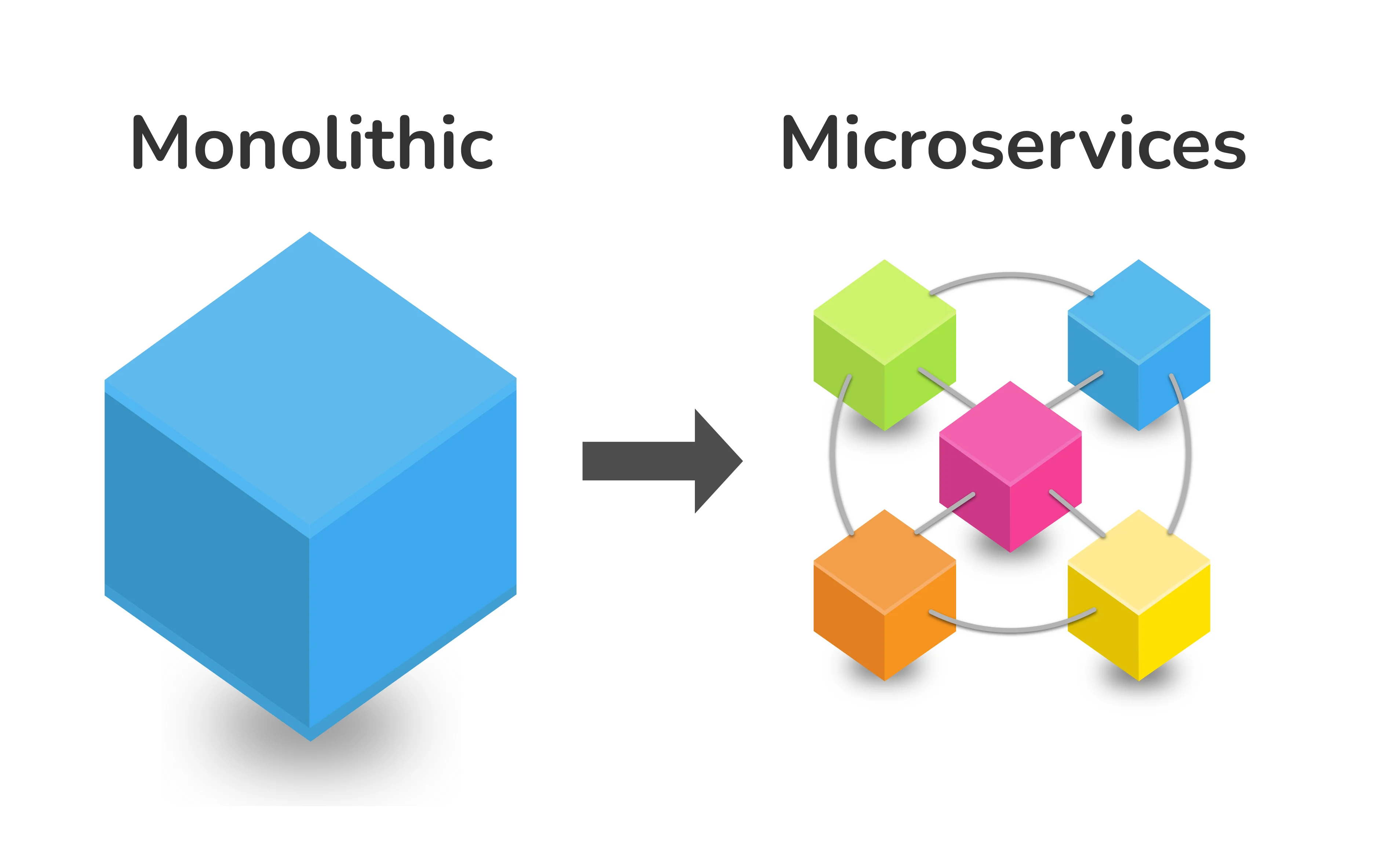

As AI systems move from research notebooks to real-world products, deploying them reliably at scale has become a systems engineering challenge—not just a machine learning one. In production, AI models live inside distributed systems where latency, scalability, resilience, and operational simplicity matter as much as accuracy.

This blog explores microservices design through the lens of AI and ML systems, not from an MLOps or training perspective but from a purely distributed-systems and backend-architecture standpoint. Each section reframes classic microservices concepts (statelessness, communication patterns, scaling, and fault tolerance) under the unique constraints introduced by heavy compute, GPUs, variable latency, and fast-evolving models.

The goal is to help AI engineers, backend developers, and system designers think like production engineers, and to prepare for interviews that test real-world architectural judgment rather than textbook definitions.

Section 1: Service Boundaries & Decomposition

Q1. How do you decide service boundaries when designing AI-based microservices?

I decide service boundaries based on independent change, independent scaling, and independent deployment, not based on technical layers alone.

In AI systems, model inference, preprocessing, orchestration, and business logic often evolve at different speeds. For example, models may be retrained or replaced frequently, while APIs and business workflows remain stable. In such cases, I isolate model inference as its own microservice so that model updates don’t force redeployment of the entire system.

I also evaluate runtime characteristics. If a component has very different CPU, memory, or GPU requirements, it deserves its own service. For instance, heavy inference workloads should not share a service with lightweight request validation or routing logic.

Finally, I validate boundaries by asking: Can this service be owned, deployed, and scaled independently without causing coordination overhead? If the answer is no, the boundary is probably wrong.

Q2. Should each machine learning model be deployed as a separate microservice? Why or why not?

Not necessarily. While separate services provide isolation and independent scaling, they also increase operational complexity, latency, and cost.

I deploy each model as a separate microservice only when models differ in lifecycle, scaling needs, or ownership. For example, a recommendation model and a fraud detection model usually have different traffic patterns, retraining cycles, and performance constraints — so they should be isolated.

However, if multiple models are always executed together — such as in an ensemble or fixed pipeline — separating them into different services may add unnecessary network hops and failure points. In such cases, grouping them into a single service can improve latency and simplify operations.

The decision is not about “one model = one service”, but about minimizing coupling while avoiding over-engineering.

Q3. Would you separate data preprocessing and inference into different microservices?

I separate preprocessing from inference only when preprocessing has a strong reason to exist independently — such as reuse across multiple models, heavy computation, or ownership by a different team.

For real-time inference, splitting preprocessing and inference often hurts performance because it adds network latency and additional failure modes. In these cases, I prefer to keep preprocessing inside the inference service so the entire prediction path is optimized and observable as a single unit.

However, for batch inference or shared feature pipelines, separating preprocessing can make sense. For example, a feature engineering service that prepares standardized inputs for multiple downstream models can reduce duplication and improve consistency.

So the rule I follow is: optimize for latency and simplicity first, reuse second.

Q4. What are common mistakes teams make when defining AI microservice boundaries?

The most common mistake is over-decomposition. Teams often split systems too early — creating separate services for tokenization, normalization, inference, post-processing, and logging — without real operational justification.

In AI systems, each additional service introduces latency, network failures, and debugging complexity. This is especially harmful for real-time use cases where inference is already expensive.

Another mistake is defining boundaries based purely on code structure instead of runtime behavior. For example, separating services that always scale together or always fail together provides little benefit and increases overhead.

Strong designs start coarse-grained and evolve toward finer boundaries only when scaling, ownership, or deployment demands it.

Q5. How do you validate that your service boundaries are correct in production?

I validate service boundaries by observing deployment friction, scaling behavior, and incident patterns.

If small model updates require coordinated redeployment across many services, the boundaries are too tight. If one service frequently becomes a bottleneck or requires different scaling policies, it may need further isolation.

I also look at operational signals: frequent cross-service failures, excessive retries, or high inter-service latency usually indicate poor boundaries.

In practice, service boundaries are hypotheses. Production metrics, incident reports, and team workflows provide feedback. Good boundaries reduce coordination costs and improve system resilience over time.

Key Interview Signal (What the Interviewer Is Listening For)

When you answer these questions well, the interviewer hears:

- You understand distributed systems, not just ML

- You design for operational reality, not diagrams

- You can balance latency, scale, and complexity

- You avoid dogma (“everything must be a microservice”)

Section 2: Statelessness vs Statefulness in AI Microservices

Q1. Are AI / ML inference microservices stateless? Explain clearly.

From a microservices contract perspective, AI inference services should be stateless: each request must contain all the information required to generate a response, and no client-visible state should be stored inside the service.

However, from a runtime perspective, inference services maintain internal state such as loaded models, tokenizers, GPU memory allocations, and sometimes compiled graphs. This state is purely performance-related, not business-critical.

The key distinction is this:

- Client state must never live inside the service

- Execution state is acceptable as long as it is rebuildable

This design allows horizontal scaling and safe restarts without affecting correctness.

Q2. Why is statelessness especially important for scaling AI services?

Stateless services can be replicated freely, which is critical for AI systems because inference workloads are often bursty and unpredictable.

If user- or request-specific state is stored inside the service, scaling becomes difficult: requests must be routed to specific instances, failures cause data loss, and autoscaling breaks.

By externalizing state — such as conversation history, embeddings, or user context — AI services can scale horizontally without coordination. This also allows load balancers to distribute requests evenly and replace unhealthy instances without special handling.

In short, statelessness is what makes AI services elastic and fault-tolerant, despite their heavy compute nature.

Q3. How do you handle conversational state in LLM-based microservices while keeping them stateless?

Conversational state must be stored outside the inference service, typically in a database, cache, or vector store. The inference service receives the required context as part of each request.

For example, the client or an orchestration layer retrieves conversation history, summarizes or selects relevant context, and sends it along with the prompt. The LLM service itself remains unaware of conversation identity.

This approach allows:

- Horizontal scaling

- Safe restarts

- Multiple models to serve the same conversation

The inference service becomes a pure function of input → output, which is ideal for distributed systems.
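The orchestration flow described above can be sketched as follows. This is a minimal illustration under stated assumptions: `CONVERSATION_STORE` is a hypothetical in-memory dict standing in for an external database or cache, `generate` is a hypothetical stand-in for the LLM call, and keeping only the last few messages is just one possible context-selection policy. Only the orchestration layer knows the conversation ID; the inference function sees nothing but its arguments.

```python
from typing import Dict, List

# Hypothetical stand-in for an external store (database, cache, etc.).
# In a real deployment this lives outside the inference service.
CONVERSATION_STORE: Dict[str, List[str]] = {}

MAX_CONTEXT_TURNS = 4  # assumption: keep only the most recent messages


def generate(context: List[str], prompt: str) -> str:
    """Stateless inference stand-in: the output depends only on the arguments."""
    return f"reply to '{prompt}' using {len(context)} context messages"


def handle_turn(conversation_id: str, prompt: str) -> str:
    """Orchestration layer: load history, call the stateless model, save history."""
    history = CONVERSATION_STORE.get(conversation_id, [])
    context = history[-MAX_CONTEXT_TURNS:]  # select the relevant context
    reply = generate(context, prompt)       # any replica can serve this call
    CONVERSATION_STORE[conversation_id] = history + [prompt, reply]
    return reply
```

Because `generate` never touches the store, any replica (or a different model entirely) can serve the next turn of the same conversation.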

Q4. When is it acceptable for an AI microservice to maintain internal state?

Internal state is acceptable when it is non-critical, rebuildable, and purely performance-related.

Examples include:

- Loaded model weights in memory

- Tokenizer caches

- Embedding or inference result caches

- GPU memory allocations

This state improves performance but must not affect correctness. If an instance crashes, the system should recover simply by reloading the model and continuing.

Any state that affects business logic, user behavior, or correctness — such as session data or user preferences — must be externalized.
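A minimal sketch of "rebuildable" execution state, assuming a hypothetical `load_model` function in place of reading real weights from artifact storage. The holder caches the loaded model per process; dropping it (as a crash or restart would) costs only a reload and never changes what clients receive.

```python
import threading
from typing import Callable, Optional


def load_model(version: str) -> Callable[[str], str]:
    """Hypothetical loader; a real one would read weights from artifact storage."""
    return lambda text: f"{version}:{text.upper()}"


class ModelHolder:
    """Holds rebuildable execution state (the loaded model) for one process."""

    def __init__(self, version: str) -> None:
        self.version = version
        self._model: Optional[Callable[[str], str]] = None
        self._lock = threading.Lock()
        self.load_count = 0  # only here to show that reloads are harmless

    def get(self) -> Callable[[str], str]:
        # Double-checked locking: load once per process, on first use.
        if self._model is None:
            with self._lock:
                if self._model is None:
                    self._model = load_model(self.version)
                    self.load_count += 1
        return self._model

    def reset(self) -> None:
        """Simulates a crash or restart: only the cache is lost, never client data."""
        with self._lock:
            self._model = None


def predict(holder: ModelHolder, text: str) -> str:
    # No session or user data is read from or written to the instance.
    return holder.get()(text)
```

In production you would usually call `get()` eagerly at startup rather than on the first request, so the load cost is paid before the instance takes traffic.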

Q5. What problems arise if AI services become stateful in practice?

Stateful AI services introduce several operational risks:

- Scaling limitations: instances can’t be replaced freely

- Sticky routing: load balancers must route requests to specific instances

- Failure amplification: crashes cause state loss or inconsistent behavior

- Complex recovery logic: state must be synchronized or replicated

These issues are especially dangerous in AI systems, where services are already heavy and slower to start. Stateful designs turn routine failures into major incidents.

As a result, experienced teams aggressively protect statelessness at the service boundary.

Key Interview Signal

When you answer this section well, the interviewer understands that you:

- Distinguish logical statelessness from runtime state

- Know how to design scalable LLM and inference services

- Understand failure modes in distributed AI systems

- Think in terms of contracts, not implementation details

Section 3: Communication Patterns (Synchronous vs Asynchronous)

Q1. When should AI microservices use synchronous communication?

Synchronous communication is appropriate when low latency and immediate response are business requirements and inference time is predictable.

Typical examples include:

- Chatbots

- Real-time recommendations

- Fraud or risk scoring in request paths

In these cases, REST or gRPC works well, but only if strict timeouts, circuit breakers, and concurrency limits are enforced. AI inference is expensive, so synchronous calls must be carefully bounded to avoid cascading failures.

I treat synchronous inference as a hot path that must be optimized, protected, and monitored aggressively.
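One way to bound a synchronous inference call is sketched below with `asyncio`. It assumes a hypothetical `infer` coroutine whose `delay` argument simulates variable model latency, and the limits are illustrative, not recommendations. A semaphore caps in-flight calls, requests beyond the cap are rejected immediately instead of queueing, and `asyncio.wait_for` enforces a strict timeout.

```python
import asyncio
from typing import List, Union

MAX_IN_FLIGHT = 2       # assumption: sized to what one replica can serve
TIMEOUT_SECONDS = 0.2   # assumption: derived from the caller's latency budget


class Overloaded(Exception):
    """Raised instead of queueing when the hot path is already saturated."""


async def infer(payload: str, delay: float) -> str:
    """Hypothetical inference call; `delay` simulates variable model latency."""
    await asyncio.sleep(delay)
    return payload.upper()


async def bounded_infer(sem: asyncio.Semaphore, payload: str, delay: float) -> str:
    if sem.locked():
        # Concurrency limit reached: reject now rather than let requests pile up.
        raise Overloaded(payload)
    async with sem:
        # Strict timeout: a slow inference fails fast instead of holding resources.
        return await asyncio.wait_for(infer(payload, delay), TIMEOUT_SECONDS)


async def demo() -> List[Union[str, BaseException]]:
    sem = asyncio.Semaphore(MAX_IN_FLIGHT)
    return await asyncio.gather(
        bounded_infer(sem, "a", 0.01),   # fast: succeeds
        bounded_infer(sem, "b", 1.0),    # slow: exceeds the timeout
        bounded_infer(sem, "c", 0.01),   # both slots busy: rejected
        return_exceptions=True,
    )
```

Running `asyncio.run(demo())` yields one result, one timeout, and one rejection. Every outcome is bounded in time, which keeps threads and connections from being exhausted upstream.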

Q2. When is asynchronous communication the better choice for AI systems?

Asynchronous communication is better when inference is:

- Long-running

- Resource-intensive

- Non-interactive

- Batch-oriented

Examples include batch scoring, document processing, video analysis, or large LLM prompts. In these cases, using message queues or event streams decouples request submission from execution.

Async patterns prevent API timeouts, absorb traffic spikes, and allow better control over resource usage. They also enable retries and backpressure without blocking upstream services.

For heavy AI workloads, async is often the default, not the exception.
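The submit-then-poll pattern that decouples request submission from execution can be sketched like this. This is a minimal in-process illustration: `asyncio.Queue` and the `jobs` dict are hypothetical stand-ins for a real message broker and a durable job store, and the worker's `sleep` stands in for long-running inference.

```python
import asyncio
import uuid
from typing import Dict, Optional, Tuple


class JobService:
    """Accepts work immediately and executes it later, off the request path."""

    def __init__(self) -> None:
        self.queue: "asyncio.Queue[str]" = asyncio.Queue()
        self.jobs: Dict[str, dict] = {}  # hypothetical stand-in for a durable job store

    def submit(self, document: str) -> str:
        job_id = str(uuid.uuid4())
        self.jobs[job_id] = {"status": "pending", "input": document, "result": None}
        self.queue.put_nowait(job_id)  # no inference here, so no API timeout risk
        return job_id

    def status(self, job_id: str) -> Optional[dict]:
        return self.jobs.get(job_id)  # clients poll this (or receive a callback)

    async def worker(self) -> None:
        while True:
            job_id = await self.queue.get()
            job = self.jobs[job_id]
            job["status"] = "running"
            await asyncio.sleep(0.01)  # hypothetical long-running inference
            job["result"] = len(job["input"].split())
            job["status"] = "done"
            self.queue.task_done()


async def demo() -> Tuple[str, dict]:
    svc = JobService()
    worker = asyncio.create_task(svc.worker())
    job_id = svc.submit("scan this long document")
    status_at_submit = svc.status(job_id)["status"]
    await svc.queue.join()  # stands in for the client polling until completion
    worker.cancel()
    return status_at_submit, svc.status(job_id)
```

The client gets a job ID back instantly; how long inference takes no longer affects the API's response time.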

Q3. Why do synchronous patterns become risky with AI microservices?

AI inference time is inherently variable. Even with the same model, latency can fluctuate based on input size, hardware contention, or model warm-up state.

In synchronous systems, this variability propagates upstream, causing:

- Thread exhaustion

- Request pile-ups

- Cascading timeouts

- Full system outages

This violates a core microservices assumption: services should respond quickly and predictably. That’s why AI services require stricter timeouts, load shedding, or async designs to remain resilient.

Q4. How do message queues improve resilience in AI microservices?

Message queues act as a buffer between demand and compute capacity. They allow systems to accept work even when inference services are temporarily overloaded.

Queues also enable:

- Controlled concurrency

- Retry policies without blocking clients

- Backpressure signaling

- Graceful degradation under load

For AI workloads, this is critical because compute resources — especially GPUs — are finite and expensive. Queues help ensure that overload degrades throughput, not availability.
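The buffering and backpressure behavior can be illustrated with a bounded queue and a fixed worker pool. This is a sketch, not a broker integration: `asyncio.Queue` stands in for a real queue such as Kafka or RabbitMQ, `NUM_WORKERS` is an assumed mapping to available GPU slots, and the sleep simulates inference.

```python
import asyncio
from typing import List, Tuple

NUM_WORKERS = 2   # assumption: one worker per available GPU slot
QUEUE_SIZE = 3    # assumption: buffer sized to the longest acceptable wait


async def gpu_worker(queue: "asyncio.Queue[int]", done: List[int]) -> None:
    while True:
        item = await queue.get()
        await asyncio.sleep(0.01)  # hypothetical inference on a finite GPU
        done.append(item)
        queue.task_done()


async def run_burst(n_requests: int) -> Tuple[List[int], List[int]]:
    queue: "asyncio.Queue[int]" = asyncio.Queue(maxsize=QUEUE_SIZE)
    done: List[int] = []
    rejected: List[int] = []
    # A fixed number of workers gives controlled concurrency on the GPU.
    workers = [asyncio.create_task(gpu_worker(queue, done)) for _ in range(NUM_WORKERS)]
    for i in range(n_requests):
        try:
            queue.put_nowait(i)   # accept work while the buffer has room
        except asyncio.QueueFull:
            rejected.append(i)    # backpressure signal: caller retries later (e.g. HTTP 429)
    await queue.join()
    for w in workers:
        w.cancel()
    return sorted(done), rejected
```

With a burst of five requests, three are buffered and processed while two receive an explicit backpressure signal. The service stays available, and only throughput is capped.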

Q5. How do you avoid tight coupling when AI services communicate synchronously?

I enforce strict service contracts and treat inference services as black boxes. Upstream services should depend only on stable request/response schemas, never on model behavior or performance assumptions.

Additionally, I place an API gateway or orchestration layer between clients and AI services. This layer handles retries, fallbacks, timeouts, and routing — insulating clients from AI-specific instability.

Even when synchronous calls are required, control logic must live outside the AI service to keep coupling low.

Key Interview Signal

Strong answers here show that you:

- Understand latency variance in AI systems

- Know when sync breaks down at scale

- Use async patterns intentionally, not dogmatically

- Design for resilience under heavy compute

Interviewers are listening for phrases like:

“bounded latency”, “backpressure”, “compute variability”, “hot vs cold paths”

Section 4: Latency & Performance in AI Microservices

Q1. Why is latency a bigger challenge in AI microservices compared to traditional microservices?

In traditional microservices, most latency comes from network calls and I/O, which are relatively predictable. In AI microservices, inference time dominates and is highly variable.

Model execution depends on input size, hardware contention, memory availability, and warm-up state. This means latency distributions are wider, with long tails that directly impact user experience.

Because inference is expensive, even small architectural inefficiencies — extra hops, serialization overhead, cold starts — become significant. As a result, AI microservices must be designed with latency as a first-class constraint, not an afterthought.

Q2. What are the main sources of latency in an AI inference microservice?

Latency typically comes from multiple layers:

- Request handling – serialization, validation, authentication

- Model loading – especially during cold starts

- Inference execution – CPU/GPU computation

- Pre/post-processing – tokenization, normalization, decoding

- Network hops – especially if services are over-decomposed

In many systems, inference itself is not the only bottleneck. Poor service boundaries or unnecessary cross-service calls often add more latency than the model computation.

Q3. How do you reduce inference latency without changing the ML model itself?

I focus on system-level optimizations:

- Keep inference services warm by maintaining minimum replicas

- Batch compatible requests to improve hardware utilization

- Cache frequent or deterministic requests

- Use efficient protocols such as gRPC (binary Protobuf messages over HTTP/2) instead of JSON over REST where possible

- Isolate inference services from unrelated workloads to avoid resource contention

These techniques often yield larger latency improvements than model-level changes, especially in production environments.
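Request batching is the least obvious item on that list, so here is a minimal micro-batching sketch. It assumes a hypothetical `batch_model` function that processes a list of inputs in a single call, with illustrative limits. Requests are collected until the batch is full or a small wait budget expires, then they run together and each caller receives its own result.

```python
import asyncio
from typing import List, Tuple

MAX_BATCH_SIZE = 4          # assumption: limited by GPU memory
MAX_WAIT_SECONDS = 0.005    # assumption: extra latency accepted to fill a batch


def batch_model(inputs: List[str]) -> List[str]:
    """Hypothetical batched model: one forward pass handles many inputs."""
    return [text.upper() for text in inputs]


class MicroBatcher:
    def __init__(self) -> None:
        self.queue: "asyncio.Queue[Tuple[str, asyncio.Future]]" = asyncio.Queue()
        self.batch_sizes: List[int] = []

    async def predict(self, text: str) -> str:
        fut = asyncio.get_running_loop().create_future()
        await self.queue.put((text, fut))
        return await fut  # resolved when this input's batch completes

    async def run(self) -> None:
        loop = asyncio.get_running_loop()
        while True:
            batch = [await self.queue.get()]  # wait for the first request
            deadline = loop.time() + MAX_WAIT_SECONDS
            # Keep collecting until the batch is full or the wait budget is spent.
            while len(batch) < MAX_BATCH_SIZE:
                remaining = deadline - loop.time()
                if remaining <= 0:
                    break
                try:
                    batch.append(await asyncio.wait_for(self.queue.get(), remaining))
                except asyncio.TimeoutError:
                    break
            outputs = batch_model([text for text, _ in batch])
            self.batch_sizes.append(len(batch))
            for (_, fut), output in zip(batch, outputs):
                fut.set_result(output)


async def demo() -> Tuple[List[str], List[int]]:
    batcher = MicroBatcher()
    runner = asyncio.create_task(batcher.run())
    results = await asyncio.gather(*(batcher.predict(w) for w in "abcde"))
    runner.cancel()
    return list(results), batcher.batch_sizes
```

`MAX_WAIT_SECONDS` is the key trade-off: a larger value fills batches more often and improves hardware utilization, but it adds directly to each request's latency.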

Q4. Why are cold starts particularly problematic for AI microservices?

Cold starts in AI services involve loading large model artifacts, initializing runtimes, and allocating GPU memory — all of which can take seconds or even minutes.

If autoscaling scales services down to zero or spins up instances reactively, users experience severe latency spikes. This is unacceptable for real-time applications.

That’s why AI microservices often require warm pools, pre-provisioned capacity, or minimum replica settings. The cost trade-off is intentional: predictable latency is prioritized over aggressive cost savings.

Q5. How do you balance latency, throughput, and cost in AI microservices?

I separate workloads into hot paths and cold paths.

Hot paths — such as user-facing inference — prioritize low latency and run on always-on services with tight SLAs. Cold paths — such as batch scoring or analytics — optimize for throughput and cost using async execution and elastic scaling.

This separation allows the system to meet user expectations without over-provisioning expensive resources across all workloads. Performance becomes a product decision, not just a technical one.

Key Interview Signal

If you answer this section well, interviewers hear that you:

- Understand latency as a distribution, not a single number

- Design systems around long-tail behavior

- Optimize architecture before touching models

- Can explain cost–performance trade-offs clearly

Section 5: Scaling Strategy for AI Microservices

Q1. Can AI / ML inference microservices scale horizontally like normal microservices?

Yes, AI inference services can scale horizontally, but with important constraints that don’t exist in typical stateless APIs.

Horizontal scaling works only if each instance is self-sufficient — meaning the model is preloaded, dependencies are available, and hardware resources (CPU/GPU) are properly allocated. Unlike normal services, spinning up a new AI instance is expensive due to model loading time and hardware initialization.

So while horizontal scaling is conceptually possible, it must be planned and proactive, not purely reactive.

Q2. Why is autoscaling harder for AI microservices than for traditional APIs?

Traditional autoscaling relies on signals like CPU or request count, which correlate well with load. In AI systems, these signals are often misleading.

Inference latency depends on model complexity, input size, batching behavior, and GPU contention — not just request volume. Additionally, scale-up actions may take too long due to cold starts, making reactive autoscaling ineffective.

As a result, AI autoscaling requires custom metrics such as queue depth, GPU utilization, or end-to-end latency, combined with pre-warmed capacity.
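A scaling decision driven by these custom metrics might look like the sketch below. The targets and bounds are illustrative assumptions. The GPU term borrows the ratio rule documented for the Kubernetes Horizontal Pod Autoscaler (desired = ceil(current × observed / target)), but this function is a standalone sketch, not the HPA's implementation. The `min_replicas` floor is what keeps capacity warm.

```python
import math


def desired_replicas(
    current_replicas: int,
    queue_depth: int,
    gpu_utilization: float,
    target_queue_per_replica: int = 8,     # assumption
    target_gpu_utilization: float = 0.7,   # assumption
    min_replicas: int = 2,                 # warm floor: never scale to zero
    max_replicas: int = 20,                # ceiling set by GPU budget
) -> int:
    # Proposal 1: enough replicas to keep the per-replica backlog near target.
    by_queue = math.ceil(queue_depth / target_queue_per_replica)
    # Proposal 2: ratio rule, current * observed / target (as in the HPA docs).
    by_gpu = math.ceil(current_replicas * gpu_utilization / target_gpu_utilization)
    # Take the larger proposal, then clamp to the warm floor and budget ceiling.
    return max(min_replicas, min(max_replicas, max(by_queue, by_gpu)))
```

Because scale-up is slow for AI services, the floor and a queue-based signal matter more than a fast reaction to CPU load.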

Q3. How do you handle sudden traffic spikes for AI inference services?

I assume traffic spikes will happen and design for them upfront.

Common strategies include:

- Maintaining warm replicas at all times

- Introducing request queues to absorb bursts

- Applying rate limiting and load shedding

- Gracefully degrading responses when capacity is exceeded

The goal is not to serve every request instantly, but to prevent system collapse. In AI systems, controlled degradation is far better than global failure.
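Rate limiting and load shedding are often implemented with a token bucket. Here is a minimal single-threaded sketch. The injectable `clock` parameter is an assumption added so the behavior can be shown deterministically. Bursts up to `capacity` are admitted, the sustained rate is capped at `rate` per second, and excess requests are shed rather than queued indefinitely.

```python
import time
from typing import Callable


class TokenBucket:
    """Admits `rate` requests per second on average, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at the bucket capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # shed this request (e.g. HTTP 429 or a degraded response)
```

With `rate=2` and `capacity=3`, a burst of five requests admits three. One second later, two more are admitted.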

Q4. When does vertical scaling make more sense than horizontal scaling for AI services?

Vertical scaling makes sense when models are large, memory-intensive, or GPU-bound, and cannot be efficiently replicated across many nodes.

For example, very large LLMs may run best on a small number of high-memory GPUs rather than many smaller instances. In such cases, scaling up hardware provides better performance and simpler operations than scaling out.

This is a deviation from typical microservices philosophy, but it reflects the realities of AI workloads.

Q5. How do you decide the right scaling strategy for an AI microservice?

I evaluate:

- Model size and load time

- Latency requirements

- Traffic variability

- Hardware constraints

- Cost tolerance

If the service is user-facing and latency-sensitive, I prioritize warm horizontal scaling. If it’s batch-oriented or compute-heavy, I lean toward async processing and vertical scaling.

Scaling decisions in AI systems are not generic — they are workload-specific and cost-driven.

Key Interview Signal

Strong answers here show that you:

- Understand why AI breaks naïve autoscaling assumptions

- Know how to design for bursty, heavy workloads

- Can reason about GPUs, cold starts, and cost

- Treat scaling as an architectural decision, not a configuration tweak

Section 6: Service Discovery & Routing in AI Microservices

Q1. How does service discovery work for AI microservices, and how is it different from normal services?

At a basic level, AI microservices use the same discovery mechanisms as other services — DNS, service registries, or orchestration platforms. The difference is that discovery is not only about finding an endpoint, but about finding the right capability.

For AI systems, services may differ by:

- Model type

- Model version

- Hardware (CPU vs GPU)

- Performance characteristics

So discovery often becomes capability-based routing, where requests are routed to services that can actually satisfy them, not just any healthy instance.

Q2. How do you route requests to different ML models within a microservices architecture?

I introduce a routing layer — usually an API Gateway or orchestration service — that examines request metadata and decides which model service should handle the request.

Routing decisions may be based on task type, tenant, experiment group, or SLA requirements. This avoids hardcoding model logic into clients and allows models to evolve independently.

This pattern keeps clients simple and makes the system adaptable to change.
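Capability-based routing in the gateway can be sketched as a lookup over endpoint metadata. Everything here is hypothetical: the registry entries, URLs, latency figures, and cost weights are illustrative, and a real registry would be populated from service discovery. The router filters endpoints that can serve the task within the caller's SLA, then picks the cheapest one.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelEndpoint:
    url: str              # hypothetical internal service address
    task: str             # capability this deployment serves
    hardware: str         # "gpu" or "cpu"
    p99_ms: int           # observed tail latency for this deployment
    relative_cost: float  # illustrative cost weight


# Hypothetical registry; in practice populated from service discovery metadata.
REGISTRY: List[ModelEndpoint] = [
    ModelEndpoint("http://summarizer-gpu:8000", "summarize", "gpu", 400, 5.0),
    ModelEndpoint("http://summarizer-cpu:8000", "summarize", "cpu", 2500, 1.0),
    ModelEndpoint("http://fraud-scorer:8000", "fraud_score", "cpu", 30, 0.2),
]


def route(task: str, sla_ms: int = 10_000) -> Optional[str]:
    """Return the cheapest endpoint that serves `task` within the caller's SLA."""
    capable = [e for e in REGISTRY if e.task == task and e.p99_ms <= sla_ms]
    if not capable:
        return None  # the gateway would return an error or trigger a fallback
    return min(capable, key=lambda e: e.relative_cost).url
```

A strict SLA sends summarization to the GPU deployment, while a relaxed one uses the cheaper CPU deployment. Clients never see either URL.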

Q3. Why is an API Gateway especially important for AI microservices?

AI services are inherently less predictable than typical APIs: latency varies, models change, and capacity is limited. An API Gateway absorbs this instability.

It handles authentication, rate limiting, retries, timeouts, fallbacks, and version routing. Without a gateway, every client would need to implement AI-specific resilience logic, leading to duplication and inconsistency.

In AI systems, the gateway is not optional infrastructure — it is a stability layer.

Q4. How do you support multiple model versions running in parallel?

Multiple versions run as separate services or deployments, registered independently. Routing rules decide which version receives traffic.

This enables:

- Gradual rollouts

- Canary deployments

- A/B testing

- Fast rollback

Clients are unaware of model versions unless explicitly required. From their perspective, the API remains stable while models evolve behind it.
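A common way to implement the canary split is deterministic hash bucketing. The sketch below uses hypothetical backend URLs and version names, and the optional `pinned` argument assumes the version arrives in something like an optional request header. Each user hashes to a fixed bucket in [0, 100), so users see a consistent version, and raising the percentage only adds users to the canary.

```python
import hashlib
from typing import Optional

# Hypothetical deployments of the same API backed by different model versions.
BACKENDS = {"v1": "http://ranker-v1:8000", "v2": "http://ranker-v2:8000"}
STABLE, CANARY = "v1", "v2"


def bucket(user_id: str) -> int:
    """Map a user to a stable bucket in [0, 100) using a hash of their ID."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def resolve_backend(user_id: str, canary_percent: int,
                    pinned: Optional[str] = None) -> str:
    # An explicit, known version pin (e.g. from an optional header) wins.
    if pinned in BACKENDS:
        return BACKENDS[pinned]
    # Otherwise split traffic deterministically so each user sees one version.
    version = CANARY if bucket(user_id) < canary_percent else STABLE
    return BACKENDS[version]
```

Rollback is a configuration change: setting `canary_percent` to 0 sends everyone back to the stable version without redeploying anything.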

Q5. What problems arise if clients directly call AI microservices without a routing layer?

Direct calls tightly couple clients to deployment details such as service names, versions, and capacity limits. Any model change forces client updates, which slows iteration and increases risk.

It also pushes resilience logic — retries, fallbacks, throttling — into every client, which is error-prone and inconsistent.

A routing layer centralizes these concerns, reducing coupling and making the system safer to operate at scale.

Key Interview Signal

When interviewers hear these answers, they know you:

- Understand capability-aware routing

- Think beyond DNS and load balancers

- Design for multi-model, multi-version systems

- Use gateways as architectural primitives, not just infra

Section 7: Versioning & Compatibility in AI Microservices

Q1. How do you version AI microservices when models change frequently?

I version APIs, not models. Clients integrate with a stable contract, while models evolve behind that interface.

Model changes that don’t affect input/output semantics — such as retraining, fine-tuning, or performance improvements — should not trigger API version changes. Only breaking schema or behavioral changes justify a new API version.

Internally, models are versioned independently and deployed separately, but clients remain insulated from that complexity.

Q2. Can multiple versions of an AI model run in parallel? Why is this important?

Yes, and they often should. Running multiple versions in parallel allows controlled rollouts, A/B testing, and rapid rollback if issues arise.

This is especially important in AI systems because model behavior can change in subtle, non-obvious ways. Parallel versions let teams compare real-world behavior safely before committing fully.

From a microservices perspective, parallel versions reduce deployment risk and increase system resilience.

Q3. How do you expose multiple model versions without breaking clients?

I keep the external API stable and route requests internally based on versioning rules. Clients are unaware of which model version serves their request unless explicitly required.

If clients need control, versioning can be expressed via headers or optional parameters, not by changing endpoints. This avoids client-side branching logic and preserves backward compatibility.

Breaking changes always require a new API version, but that decision is deliberate and infrequent.

Q4. What are common mistakes teams make with versioning AI services?

One common mistake is versioning on every model change, which forces unnecessary client updates and slows development.

Another mistake is mixing API versioning with model experimentation logic — for example, exposing experimental behavior directly to production clients.

Experienced teams treat versioning as a contract management problem, not a model management problem.

Q5. How do you decide when a model change is a breaking change?

A model change is breaking if it changes input requirements, output structure, or semantic expectations relied upon by clients.

For example, adding new fields to a response is usually safe, but changing output types or removing fields is breaking. Changes in accuracy or confidence distribution are not breaking from an API perspective, but they may require business validation.

The key is to separate technical compatibility from business acceptance.
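The technical-compatibility half of that rule can be automated with a schema comparison. This is a minimal sketch that assumes response schemas are described as simple `{field: type_name}` dicts (a hypothetical format). Removed fields and changed types are reported as breaking, and additive fields are not.

```python
from typing import Dict, List

Schema = Dict[str, str]  # field name -> type name, e.g. {"label": "str"}


def breaking_changes(old: Schema, new: Schema) -> List[str]:
    """List changes that would break clients relying on the `old` response schema."""
    problems: List[str] = []
    for field, old_type in old.items():
        if field not in new:
            problems.append(f"removed field '{field}'")
        elif new[field] != old_type:
            problems.append(f"field '{field}' changed type {old_type} -> {new[field]}")
    # Fields that exist only in `new` are additive and treated as compatible.
    return problems
```

Treating added fields as safe assumes clients ignore fields they do not recognize. A shift in accuracy or score distribution passes this check, which is why that shift still needs separate business validation.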

Key Interview Signal

Strong answers here demonstrate that you:

- Understand contracts vs implementations

- Protect client stability while enabling rapid iteration

- Can manage risk in AI deployments

- Think like a platform engineer, not just a model developer

Section 8: Fault Tolerance & Resilience in AI Microservices

Q1. What are the most common failure modes in AI microservices?

AI microservices fail differently from traditional services. Common failure modes include:

- Model load failures due to corrupted artifacts or incompatible runtimes

- GPU exhaustion or memory fragmentation

- Excessive latency caused by input size or batching issues

- Silent failures where outputs are produced but are invalid or incomplete

These failures are often slow and partial, not clean crashes. This makes detection and recovery more difficult and requires stronger resilience mechanisms.

Q2. How do retries differ for AI inference calls compared to normal API calls?

Retries must be conservative. Inference is expensive, so blind retries can amplify overload and worsen outages.

I use short timeouts, limited retries, and circuit breakers. Retries are applied only for transient failures, such as network issues — not for compute saturation or model errors.

For AI systems, it’s often better to fail fast and degrade gracefully than to retry aggressively.
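A conservative retry policy can be sketched as follows. The two exception classes are a hypothetical classification of failures: only `TransientError` is retried, with a small attempt budget and exponential backoff with full jitter. Saturation or model errors surface on the first attempt so the caller can fail fast or degrade. The injectable `sleep` is an assumption to keep the example testable.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical class for network-level failures (resets, dropped connections)."""


class NonRetryableError(Exception):
    """Hypothetical class for compute saturation or model errors."""


def call_with_retries(
    fn: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.05,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    # Only TransientError is retried; NonRetryableError (or anything else)
    # propagates on the first attempt so the caller can fail fast.
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # retry budget exhausted
            # Exponential backoff with full jitter avoids synchronized retry storms.
            sleep(random.uniform(0, base_delay * 2 ** (attempt - 1)))
    raise RuntimeError("unreachable: max_attempts must be >= 1")
```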

Q3. How do you design graceful degradation for AI microservices?

Graceful degradation means the system continues to provide useful output even when full inference is unavailable.

This can include:

- Falling back to cached or previously computed results

- Using simpler or smaller models

- Switching to rule-based or heuristic logic

The goal is to maintain functionality and user trust, even if accuracy or richness is temporarily reduced.
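A fallback chain covering those three options can be sketched as an ordered list of tiers. All tier functions and the cache contents are hypothetical stand-ins. Each tier either returns a result, returns `None` to pass, or raises. The first usable answer wins, and the tier name is returned so responses can be flagged as degraded.

```python
from typing import Callable, Dict, List, Optional, Tuple

Tier = Tuple[str, Callable[[str], Optional[str]]]


def large_model(text: str) -> Optional[str]:
    raise TimeoutError("primary model unavailable")  # simulated outage


CACHE: Dict[str, str] = {"great product": "positive"}  # hypothetical precomputed results


def small_model(text: str) -> Optional[str]:
    # Hypothetical cheaper model; returns None when it is not confident.
    return "negative" if "broken" in text else None


def keyword_rules(text: str) -> Optional[str]:
    # Last-resort heuristic that always answers.
    return "positive" if "good" in text else "neutral"


TIERS: List[Tier] = [
    ("large_model", large_model),
    ("cache", CACHE.get),
    ("small_model", small_model),
    ("rules", keyword_rules),
]


def predict_with_fallbacks(text: str, tiers: List[Tier] = TIERS) -> Tuple[str, str]:
    """Return (tier_name, output) from the first tier that produces a result."""
    for name, fn in tiers:
        try:
            result = fn(text)
        except Exception:  # timeout, open circuit, model error, ...
            continue       # a real service would log and count this
        if result is not None:
            return name, result
    raise RuntimeError("no tier produced a result")
```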

Q4. How do circuit breakers help in AI microservices?

Circuit breakers stop failing or overloaded AI services from triggering cascading failures across the system.

Because inference is slow and resource-heavy, allowing unlimited calls during failure can exhaust resources quickly. Circuit breakers stop traffic early, protect upstream services, and give the AI service time to recover.

In AI systems, circuit breakers are not optional — they are essential safeguards.
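The closed, open, and half-open cycle can be sketched as a small state machine. This version is deliberately simplified: it is single-threaded, lets every caller through during the half-open window rather than exactly one trial request, and uses an injectable `clock` (an assumption for testability). After `failure_threshold` consecutive failures the breaker opens and fails fast. After `reset_timeout` seconds it allows a trial call, and a success closes it again.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised immediately while the breaker is open, without calling the service."""


class CircuitBreaker:
    def __init__(
        self,
        failure_threshold: int = 5,
        reset_timeout: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half_open"  # cooldown elapsed: let a trial call through
        return "open"

    def call(self, fn: Callable[[], T]) -> T:
        state = self.state
        if state == "open":
            raise CircuitOpenError("failing fast while the AI service recovers")
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if state == "half_open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # (re)open and restart the cooldown
            raise
        # Any success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```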

Q5. How do you test fault tolerance in AI microservices?

I test fault tolerance by intentionally inducing failures:

- Killing inference pods

- Forcing GPU exhaustion

- Injecting latency or timeouts

- Corrupting model artifacts in staging

Observing how the system behaves under stress reveals weaknesses that unit tests cannot catch. For AI systems, chaos testing is especially valuable because failures are often non-deterministic.

Key Interview Signal

Strong answers here show that you:

- Understand real AI failure modes

- Design for slow, partial failures

- Know when not to retry

- Prioritize system stability over perfect predictions

Section 9: Observability in AI Microservices (Microservices View)

Q1. What metrics are most important for monitoring AI microservices?

The most important metrics are system-level, not model-level:

- End-to-end latency (including long-tail percentiles)

- Error rate and timeout rate

- Throughput and request concurrency

- Queue depth and backlog

- CPU, memory, and GPU utilization

These metrics directly reflect system health and user experience. Model accuracy metrics are important, but they don’t help when the service is slow or unavailable.

Q2. Why is observability harder for AI microservices than traditional services?

AI failures are often gradual and non-binary. Instead of crashing, services may become slower, consume more memory, or produce partial results.

Inference latency also has high variance, making averages misleading. Without percentile-based metrics and correlation across services, issues go unnoticed until users complain.

This makes strong observability essential for AI systems.

Q3. How should logging be designed for AI inference services?

Logs should capture request metadata and execution context, not raw inputs or outputs.

Important logging includes:

- Request IDs for tracing

- Model version and hardware used

- Inference duration

- Error and timeout reasons

Logs must be structured and privacy-aware. Logging full prompts or outputs can be expensive, insecure, and unnecessary.
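A structured, privacy-aware log line might look like the sketch below, using the standard `logging` and `json` modules. The field names and the `sentiment-v3` version string are hypothetical. The record carries the request ID, model version, hardware, input size, duration, and error, and it never includes the prompt or the output.

```python
import json
import logging
import time
import uuid
from typing import Optional

logger = logging.getLogger("inference")


def log_inference(
    request_id: str,
    model_version: str,
    hardware: str,
    input_chars: int,
    duration_ms: float,
    error: Optional[str] = None,
) -> str:
    """Emit one structured JSON line with metadata only, never raw input or output."""
    record = {
        "event": "inference",
        "request_id": request_id,
        "model_version": model_version,
        "hardware": hardware,
        "input_chars": input_chars,  # size helps explain latency without leaking content
        "duration_ms": round(duration_ms, 1),
        "error": error,
    }
    line = json.dumps(record, sort_keys=True)
    logger.info(line)
    return line


def handle(prompt: str, request_id: Optional[str] = None) -> str:
    request_id = request_id or str(uuid.uuid4())  # reuse the upstream ID when present
    start = time.perf_counter()
    output = prompt[::-1]  # hypothetical model call
    log_inference(request_id, "sentiment-v3", "gpu", len(prompt),
                  (time.perf_counter() - start) * 1000)
    return output
```

Reusing the upstream request ID is what lets these log lines be joined with gateway logs and distributed traces.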

Q4. How do distributed traces help in AI microservices?

Distributed tracing shows where time is actually spent — gateway, preprocessing, inference, or downstream calls.

In AI systems, this is crucial because inference often dominates latency, but not always. Traces help identify architectural inefficiencies, such as unnecessary hops or misconfigured retries.

Tracing turns performance debugging from guesswork into evidence-based analysis.

Q5. How do you detect performance degradation before it becomes an outage?

I monitor latency percentiles, queue growth, and resource saturation trends rather than waiting for errors.

Gradual increases in P95 or P99 latency often indicate impending failures, such as GPU contention or memory leaks. Alerting on these early signals allows corrective action before users are impacted.

Proactive observability is the difference between firefighting and operating calmly.
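The point about percentiles versus averages can be made concrete with a small sketch. It uses the nearest-rank percentile method and an illustrative 1.5x tolerance (an assumption, not a recommended threshold). It compares the current window's P99 against a baseline window and raises an alert when the tail grows, even if the mean barely moves.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: smallest value with at least p% of samples <= it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def p99_regressed(baseline: Sequence[float], current: Sequence[float],
                  tolerance: float = 1.5) -> bool:
    """Alert when the current window's P99 exceeds the baseline P99 by `tolerance`x."""
    return percentile(current, 99) > tolerance * percentile(baseline, 99)
```

In a window where 95% of requests take 100 ms and 5% take 900 ms, the mean is 140 ms and P95 is unchanged at 100 ms, but P99 jumps to 900 ms. The tail alert fires, while an alert on the average at the same tolerance would stay silent.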

Key Interview Signal

Strong answers here show that you:

- Monitor what actually affects users

- Understand long-tail latency behavior

- Design logs and traces intentionally

- Can detect issues before outages occur

Section 10: Architectural Trade-offs & When NOT to Use Microservices (AI Context)

Q1. When would you avoid using microservices for AI systems?

I avoid microservices when the system is early-stage, research-heavy, or rapidly evolving. In such cases, requirements change frequently and service boundaries are unclear, making microservices an unnecessary tax.

For AI systems especially, microservices add latency, operational overhead, and debugging complexity. If the workload is single-model, low-traffic, or owned by a small team, a well-structured monolith is often faster to build and easier to operate.

Microservices should solve scaling or organizational problems — not create them.

Q2. What microservices assumptions break in AI systems?

Several assumptions break:

- Fast startup → AI services have heavy cold starts

- Cheap horizontal scaling → GPUs are limited and expensive

- Predictable latency → inference time is variable

These broken assumptions force architectural adjustments such as warm pools, async processing, and conservative autoscaling. Treating AI services like normal APIs leads to instability.

Q3. How do you decide between a monolith and microservices for AI workloads?

I evaluate:

- Team size and ownership boundaries

- Traffic patterns and scalability needs

- Latency sensitivity

- Operational maturity

If there is no clear need for independent scaling or deployment, I start with a monolith and evolve toward microservices as constraints emerge. Premature microservices are harder to undo than premature monoliths.

Q4. What defines a well-designed AI microservice?

A well-designed AI microservice has:

- A clear, stable responsibility

- A simple, versioned API

- Predictable latency behavior

- Strong observability and failure isolation

It hides model complexity behind a contract and behaves like a reliable system component, not an experimental artifact.

Q5. What is the most important mindset shift when designing AI microservices?

The most important shift is moving from model-centric thinking to system-centric thinking.

Success is not defined by accuracy alone, but by reliability, latency, scalability, and user experience. Models are replaceable; systems are not.

Strong AI engineers design systems that make models safe, usable, and operable in the real world.

Final Interview Takeaway

If you remember one sentence, remember this:

AI microservices are not about ML — they’re about operating heavy, unreliable compute safely at scale.

You’ve now covered:

- Service boundaries

- Statelessness

- Communication

- Latency

- Scaling

- Routing

- Versioning

- Fault tolerance

- Observability

- Architectural judgment

Designing AI systems as microservices is not about following patterns blindly—it is about understanding where traditional microservices assumptions break and adapting architecture accordingly. AI workloads challenge core ideas such as fast startup, cheap horizontal scaling, and predictable latency, forcing engineers to rethink service boundaries, communication models, and resilience strategies.

Strong AI microservice architectures prioritize system stability over model novelty, operability over abstraction purity, and clear contracts over experimental flexibility. Models will change, improve, and be replaced—but well-designed systems endure.

Whether preparing for interviews or building production platforms, the key takeaway is simple: successful AI engineering is fundamentally distributed systems engineering with heavier constraints.
